Affective Computing

Revision as of 17:14, 12 February 2011, edited by Caseorganic

Related Reading

* Media Lab at MIT
* Kelly Dobson

Definition

Developing computing architectures that account for human issues such as usability, touch, access, personal characteristics, emotion and background. Those who build systems on these principles treat computing as a solution to, or a helper with, the problems and essential aspects of human life, especially in an industrialized world.

"Plutowski (2000) identifies three broad categories of research within the area of affective or emotional computing,: emotional expression programs that display and communicate simulated emotional affect; emotional detection programs that recognize and react to emotional expression in humans; and emotional action tendencies, instilling computer programs with emotional processes in order to make them more efficient and effective. Thus specific projects at MIT (www.media.mit.edu/affect) have included the development of affective wearables such as affective jewelry, expression glasses, and a conductors jacket to designed to extend a conductor's ability to express emotion and intentionally to the audience and to the orchestra." (Plutowski 2000, in Knowing capitalism By N. J. Thrift. SAGE, 2005 - Business & Economics - 256 pages).

"computational systems with the ability to sense, recognize, understand and respond to human moods and emotions . Picard (1997; 2000) argues that to be truly interactive computers must have the power to recognize, feel and express emotion".

Learning the Language of Machines

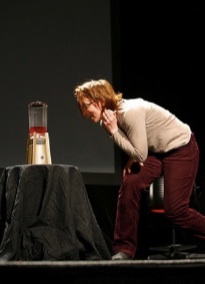

Rather than teaching machines to understand human language, MIT's Kelly Dobson programmed a blender to respond to voice activation, but not to the voice people typically use with machines. Instead of saying "Blender, ON!", the user imitates the sound of the machine itself: Dobson built the interaction around an auditory model of a machine voice.

To start the blender, she simply growls at it: the low-pitched "Rrrrrrrrr" she makes turns the blender on at low speed. To speed the machine up, she raises her voice to "RRRRRRRRRRR!", and the machine increases in intensity. In this way the machine responds to the volume and intensity of a sound rather than to human words. Why should a machine have to understand a human command when it can understand one much closer to its own language?
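The mapping described above, from how loud a growl is to how fast the blender runs, can be sketched in a few lines. This is a minimal Python sketch under stated assumptions, not Dobson's implementation: it assumes the input is one frame of audio samples normalized to the range -1.0 to 1.0, uses root-mean-square (RMS) amplitude as the loudness measure (pitch would be an equally plausible feature), and uses hypothetical thresholds (`LOW_THRESHOLD`, `HIGH_THRESHOLD`) and speed names. A synthesized sine wave (`tone`) stands in for a microphone recording.

```python
import math
from typing import List, Sequence

# Hypothetical RMS thresholds for samples normalized to [-1.0, 1.0].
# These values are illustrative assumptions, not taken from Dobson's device.
LOW_THRESHOLD = 0.1
HIGH_THRESHOLD = 0.5


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square amplitude of one audio frame (a rough loudness measure)."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def blender_speed(frame: Sequence[float]) -> str:
    """Map the loudness of a growl to a hypothetical speed setting."""
    level = rms(frame)
    if level >= HIGH_THRESHOLD:
        return "high"  # loud "RRRRRRRRRRR!"
    if level >= LOW_THRESHOLD:
        return "low"   # quiet "Rrrrrrrrr"
    return "off"       # silence or background noise


def tone(amplitude: float, freq_hz: float = 110.0,
         rate_hz: int = 8000, n: int = 800) -> List[float]:
    """Synthesize a low-pitched sine wave as a stand-in for a recorded growl."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / rate_hz)
            for i in range(n)]


if __name__ == "__main__":
    for amp in (0.05, 0.3, 0.9):
        print(amp, blender_speed(tone(amp)))
```

Run as a script, this prints `0.05 off`, `0.3 low` and `0.9 high`, since the RMS of a sine wave is its amplitude divided by the square root of 2. Fixed thresholds give discrete speed steps; a real controller would more likely smooth the level over several frames so that the motor does not flicker between settings.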